Decoding Science 016: How Particles Learned to Build a Time Crystal, Legibility of AI in Science, 3D-printed Filaments, and Robots Doing Parkour

Welcome to Decoding Science: every other week our writing collective highlights notable news, from the latest scientific papers to the latest funding rounds in AI for Science, and everything in between. All in one place.

What we read

The Legibility Problem [Matthew Carter, Asimov Press, March 2026]

Carter, a computational biologist, argues that the central challenge of AI-driven science will not be safety or mechanistic interpretability but legibility: whether humans can understand and act on what autonomous AI systems discover. He illustrates the trajectory with chess: engines went from narrowly beating Kasparov (1997), to centaur partnerships in which human and machine together were more productive than either alone, to making the human contribution irrelevant after AlphaZero taught itself the game in four hours (2017). He expects AI-for-science to follow the same arc, citing Richard Sutton’s “Bitter Lesson”: the idea that systems exploiting raw computation have, after a point, consistently outperformed those encoding human intuition.

The chess analogy does not map onto the realities of AI for science one-for-one, though: a chess engine operates within fixed rules, whereas an AI scientist would have a much more fluid scope. Drawing on Kuhn, Carter argues that AI could compress paradigm shifts that historically took generations into months, producing knowledge framed in terms humans don’t share. “If AI science does achieve superhuman performance, and if AI systems begin forming their own research communities around concepts that mutate faster than we can track, then the work of human scientists will shift from that of creation to that of excavation.”

The concern here is that discoveries could get “stranded” by the sheer volume in which they are generated: many ideas would never be implemented, because no human institution can translate them into therapies, materials, or policies. Carter’s proposed response is to build the necessary infrastructure: repositories for AI-generated findings, dedicated systems for translating AI science into human-legible terms, and tools for tracking conceptual divergence between AI and human research communities.


Rotational 3D printing of active-passive filaments and lattices with programmable shape morphing [Abdelrahman M.K. et al., arXiv, Mar. 2026]

We spend so much time talking about intelligence as if it lives only in software, but this paper is a reminder that intelligence can also be built into the mechanics of the physical world.

Operating at the pinnacle of complex multi-dimensional printing, innovative materials engineering, and hierarchical integration, the Lewis Lab steps up the soft robotics game once more. Taking inspiration from the natural dexterity of octopus tentacles, the strength and sensitivity of an elephant trunk, and the strong yet flexible nature of vine plants curving up a wall, they create shape-morphing filaments that weave together to form complex, programmable, 3D matter.

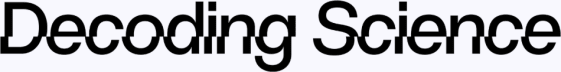

What makes this work so exciting is that it goes beyond yet another soft robotic body. By treating fabrication itself as the programming layer, the team prints a Janus-like filament in which one side consists of a passive elastomeric ink while the other, active side contains responsive elements (Figure 1A-B). Two dimensions of design are manipulated: materials and manufacturing. For the material, a liquid crystal elastomer (LCE) is chosen. Because liquid crystals respond to heat, embedding them in an elastomer matrix gives a material that changes shape flexibly with temperature. This provides the active half of the two-sided structure.

Figure 1 | A Diagram of how the composite filament was 3D printed. B 3D composite printing, in action. C Influence of changing the interfacial twist angle (*) on filament architecture. D Outer kink printed, and E inner kink printed filament, causing in F an expanding behaviour when heated, and G a contracting form when heated. H Three-layer expanding lattice, and I contracting lattice. J 3D designed view with predicted conforming architecture, K validated experimentally. L 3D designed contracting architecture, M validated experimentally.

The second design dimension is manufacturing. Using rotational multimaterial 3D printing (RM-3DP), the team combines the active LCE with a passive support elastomer, changing the properties of the overall composite filament. But beyond the ability to co-print two materials, the real innovation lies in controlling the pattern of the filament: it can be straight (Figure 1C, *=0.00) or twisted (Figure 1C, *=2.00). Structuring the material at the macro scale in this way alters how the filament behaves when heat is applied. As shown in Figure 1C, a filament with lower interfacial twist (*) bends less, whereas a filament with higher twist can also rotate to a greater degree. Consequently, a single filament can morph into a complex, heat-responsive twist-and-curve structure.

But the innovation does not stop there. Introducing a sudden kink in the filament (Figure 1D-G) enables two system architectures: a filter and a pick-and-place mesh. In the former, heat expands the 3D-printed mesh so that larger particles can pass through; in the latter, with the kink inverted, heat contracts the mesh so that particles can be grasped and moved to a lattice spot. Ultimately, the bigger idea here is the shift from a programmable filament to a complex lattice.

Zooming out, this work demonstrates potential for a much larger variety of composites in which an active-passive filament structure determines how a material can morph. Expanding the options further, the passive side could itself be replaced with an active material, allowing the gripper to respond, potentially, to electricity. And then we are truly talking: a soft robotic material that can be controlled by electric pulses is one step closer to mimicking the natural properties of flexible, strong, versatile, and intelligent grippers. Ultimately, this paper demonstrates that intelligence does not only live in code. Sometimes it lives in how we design matter to move.

Perceptive Humanoid Parkour: Chaining Dynamic Human Skills via Motion Matching [Wu, Huang et al., arXiv, Feb 2026]

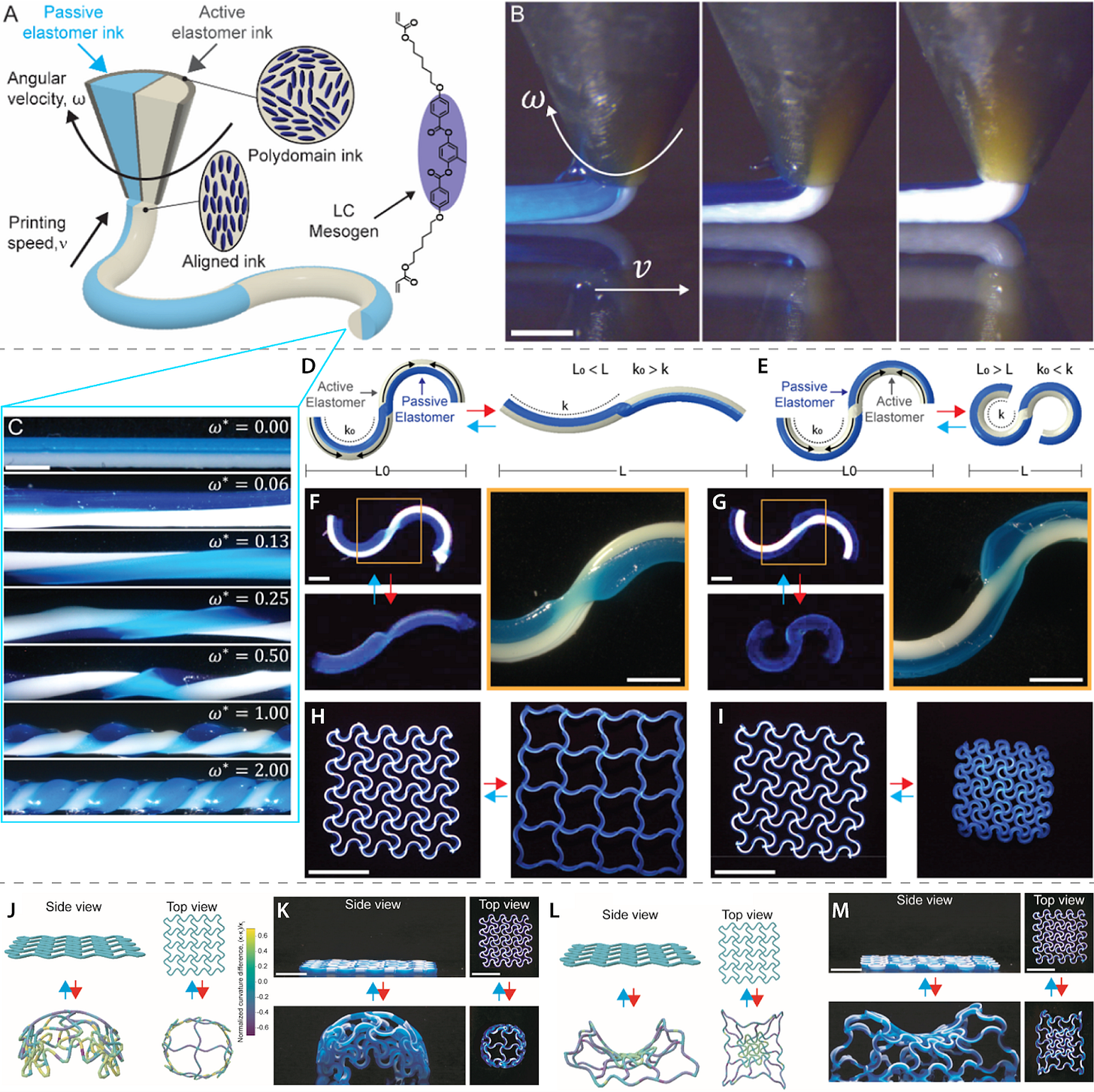

A couple of months ago at the CCTV Spring Festival Gala celebrating Chinese New Year, the world watched in amazement as teams of Unitree G1 units performed intricate martial arts routines, moving in perfect unison. While the visual effect was striking, as a demonstration of robot autonomy it was less dramatic: this was a carefully choreographed, pre-planned display that had been practised for months. To realise the future of dark warehouses and live-in humanoids doing our laundry, robots need to assess, understand and interact with new and changing environments just as seamlessly as in that choreographed display, something they are not capable of doing just yet.

This collaborative paper from Amazon Frontier AI & Robotics (FAR) and UC Berkeley takes a step in that direction, presenting “Perceptive Humanoid Parkour” (PHP), a framework that enables a Unitree G1 robot to autonomously navigate complex, multi-obstacle environments using onboard perception. Parkour was chosen as the benchmark because it combines several relevant real-world skills: highly dynamic and contact-rich movement, the perception of external stimuli to inform adaptation and rapid reactions, and the integration of these two capabilities into a single visuomotor policy that enables smooth transitions between movements. It is one of the hardest test cases for long-horizon skill chaining because each obstacle demands a different skill, and the transitions between them are where most systems fall apart.

The team approached this by first taking videos of real parkour athletes and breaking them down into reusable “atomic” skills. Motion matching, a nearest-neighbour search in feature space that lets the system stitch individual elements together, was then used to compose the human movements within these skills into diverse long-horizon trajectories. The sensing set-up was minimal: each robot relied solely on a single onboard depth camera, which gives a sparse but computationally efficient 2D depth map of whatever is in front of it (essentially a grayscale image in which pixel brightness represents distance).
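To make the nearest-neighbour idea concrete, here is a minimal sketch of a motion-matching lookup. It is an illustration rather than the paper's implementation: the two-number feature vectors, the per-feature normalisation, and the names `build_database` and `match` are all assumptions made for this example.

```python
import numpy as np

def build_database(clips):
    """Stack per-frame feature vectors from every clip into one matrix.

    `clips` is a list of arrays, each of shape (num_frames, num_features).
    The feature layout is hypothetical; real systems typically use things
    like foot positions, hip velocity and a short future trajectory.
    """
    features = np.concatenate(clips, axis=0)
    clip_ids = np.concatenate([np.full(len(c), i) for i, c in enumerate(clips)])
    frame_ids = np.concatenate([np.arange(len(c)) for c in clips])
    # Normalise each feature so that no single one dominates the distance.
    mean = features.mean(axis=0)
    std = features.std(axis=0) + 1e-8
    return (features - mean) / std, clip_ids, frame_ids, mean, std

def match(query, database):
    """Return (clip index, frame index) of the frame closest to `query`."""
    normed, clip_ids, frame_ids, mean, std = database
    q = (np.asarray(query, dtype=float) - mean) / std
    distances = np.linalg.norm(normed - q, axis=1)  # Euclidean distance per frame
    best = int(np.argmin(distances))
    return int(clip_ids[best]), int(frame_ids[best])

if __name__ == "__main__":
    # Two toy "skills", three frames each, two features per frame.
    run = np.array([[0.0, 0.0], [1.0, 0.0], [2.0, 0.0]])
    vault = np.array([[0.0, 5.0], [1.0, 5.0], [2.0, 5.0]])
    db = build_database([run, vault])
    print(match([1.1, 4.8], db))
```

In use, the character's current features form the query, and playback jumps to the returned frame, which is how separate clips get stitched into one longer trajectory.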

When it came to training, single-skill teacher policies were first trained with privileged information using RL-based motion tracking. Teachers could see the full state of their environment (obstacle heights, positions, etc.), data that a robot deployed in the real world would not have access to. The teacher policies were then distilled into a single depth-based, multi-skill student policy that uses only the inputs available in live deployment: raw depth images and proprioceptive data. To achieve this, an RL objective was added on top of the imitation signal from DAgger (Dataset Aggregation). DAgger normally relies on an imitation loss alone, which could otherwise compound minor errors within the dynamic skills necessary for parkour; the reward-based term provides task-level corrective feedback and teaches the student to be outcome-oriented rather than simply copying motion. This approach enabled zero-shot sim-to-real transfer onto a humanoid robot that uses onboard perception to move adaptively through complex and changing terrain: in other words, a robot that makes decisions entirely from what it sees, in real time.
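As a rough sketch of what combining an imitation signal with an RL term can look like, the snippet below adds a simple policy-gradient term to a DAgger-style imitation loss. The squared-error imitation loss, the advantage-weighted log-probability term, the `rl_weight` parameter and both function names are assumptions for illustration; the paper's actual objective may differ.

```python
import numpy as np

def relabel_with_teacher(states, teacher_policy):
    """DAgger step: label the states the student visited with teacher actions.

    `teacher_policy` is any callable mapping a state to an action vector
    (hypothetical interface for this sketch).
    """
    return np.stack([teacher_policy(s) for s in states])

def combined_loss(student_actions, teacher_actions, log_probs, advantages,
                  rl_weight=0.5):
    """Imitation loss plus a weighted policy-gradient (RL) term.

    student_actions, teacher_actions: arrays of shape (batch, action_dim)
    log_probs: log-probability of each action the student took, shape (batch,)
    advantages: how much better than expected each outcome was, shape (batch,)
    """
    # Imitation: mean squared distance between student and teacher actions.
    imitation = np.mean(np.sum((student_actions - teacher_actions) ** 2, axis=1))
    # RL: minimising this raises the probability of actions with high advantage.
    policy_gradient = -np.mean(log_probs * advantages)
    return imitation + rl_weight * policy_gradient

if __name__ == "__main__":
    states = [np.array([0.0, 1.0]), np.array([1.0, 1.0])]
    teacher = lambda s: 2.0 * s           # stand-in for a privileged teacher
    targets = relabel_with_teacher(states, teacher)
    student = targets + 0.1               # student is slightly off the teacher
    loss = combined_loss(student, targets,
                         log_probs=np.array([-1.0, -1.0]),
                         advantages=np.array([0.5, -0.5]))
    print(loss)
```

The imitation term keeps the student close to the teacher on the states it actually visits, while the RL term rewards outcomes, so a small deviation that still clears the obstacle is not penalised as heavily as one that causes a fall.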

In testing, PHP allowed robots to execute high-speed vaults, roll off platforms and climb walls up to 1.25 m high (96% of their own height). Researchers also tested the adaptability of robots mid-run, moving obstacles manually during a 60-second course.

The combination of motion matching, RL and real-time depth perception in a single deployable policy is a meaningful step towards robots that can navigate the unstructured environments of warehouses and delivery routes. The key advancement is the “long horizon” capability: chaining diverse, contact-rich skills smoothly and on the fly in a dynamic environment.

There is, of course, still a way to go. The obstacle courses here were a low-stakes, controlled environment and relatively predictable, even with researchers moving blocks mid-run. A 60-second continuous course is not the same as general-purpose navigation around city streets. However, this and other recent papers reflect the rate of progress across the whole field of embodied AI and humanoid robots. A year ago, Unitree robots at the Spring Festival Gala were shuffling around stiffly as they danced on the spot; now they’re vaulting obstacles at 3 m/s. All eyes on what next year has in store (here’s to hoping we see the first Unitree entry into the Housekeeping Olympics).

Nonreciprocal Wave-Mediated Interactions Power a Classical Time Crystal [Morrell et al., Physical Review Letters, Feb 2026]

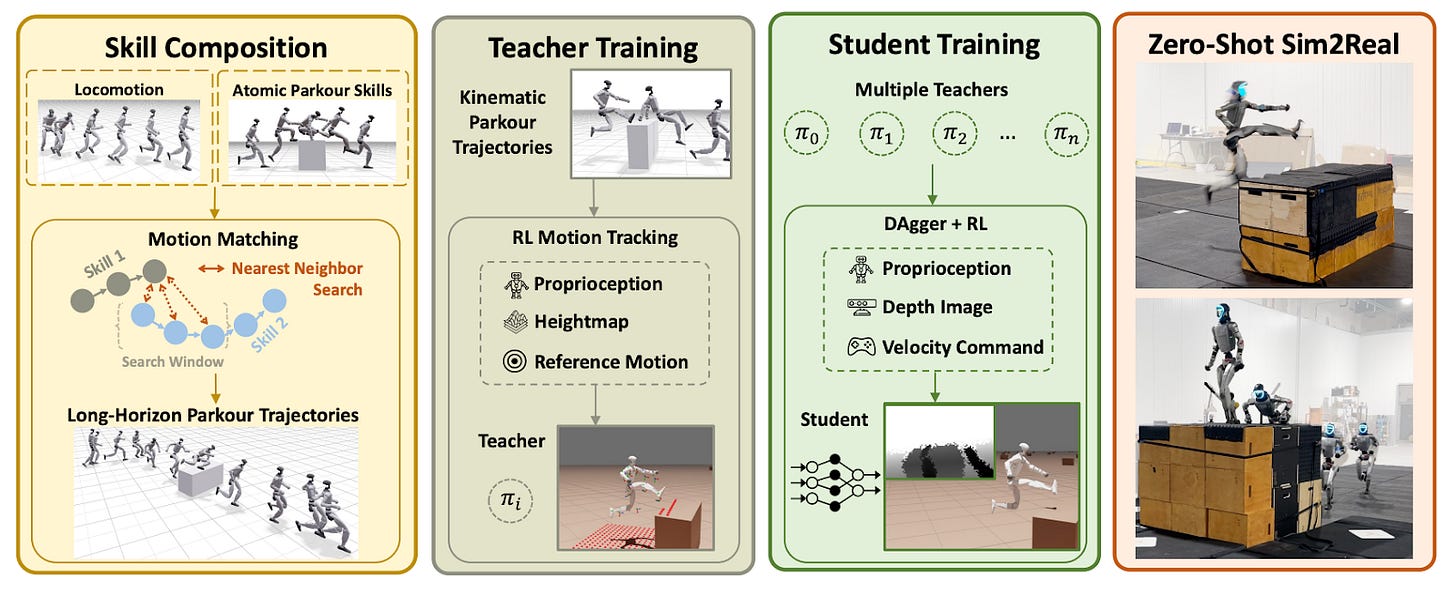

Scientists at NYU have found a new, astonishingly simple kind of time crystal: a few particles suspended in a sound field that organize themselves and keep moving in steady, repeating ways without being constantly driven. Instead of needing an external “push” to stay in motion, the particles interact through waves nonreciprocally, meaning the force one particle exerts on another is not matched by an equal and opposite force in return, which lets them draw in energy and convert it into collective behavior. What’s surprising is that this motion doesn’t come from the particles themselves, but from how they are arranged and how they interact.

In some cases, these interactions lead to continuous, self-sustained oscillations - essentially a system that keeps time on its own. In the simplest setup of just two particles, this becomes what’s called a classical time crystal: a stable rhythm that emerges naturally, rather than being imposed from outside. The exact frequency isn’t pre-set - it arises from the system itself. Small differences between particles also play an important role, helping stabilize these behaviors and allowing more complex dynamics in larger groups.
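The idea of a rhythm that emerges from unequal interactions can be illustrated with a toy model. The sketch below is not the model from the paper: it assumes two identical damped oscillators whose coupling is deliberately one-sided in sign (particle 2 pushes particle 1 with `+k*x2`, while particle 1 pushes particle 2 with `-k*x1`), plus a cubic damping term so the motion settles at a finite size. All parameter values and function names are made up for illustration.

```python
def simulate(k=0.3, gamma=0.1, beta=1.0, dt=0.02, t_end=300.0):
    """Integrate two coupled unit-mass oscillators with 4th-order Runge-Kutta.

    k     : strength of the nonreciprocal coupling (assumed form, see above)
    gamma : linear damping
    beta  : cubic damping that limits the amplitude
    Returns the position of particle 1 at every time step.
    """
    def deriv(state):
        x1, v1, x2, v2 = state
        a1 = -x1 - gamma * v1 - beta * v1 ** 3 + k * x2  # force on 1 from 2: +k*x2
        a2 = -x2 - gamma * v2 - beta * v2 ** 3 - k * x1  # force on 2 from 1: -k*x1
        return (v1, a1, v2, a2)

    def shifted(state, slope, h):
        return tuple(s + h * d for s, d in zip(state, slope))

    state = (0.01, 0.0, 0.0, 0.0)  # tiny nudge away from rest
    positions = []
    for _ in range(int(round(t_end / dt))):
        s1 = deriv(state)
        s2 = deriv(shifted(state, s1, 0.5 * dt))
        s3 = deriv(shifted(state, s2, 0.5 * dt))
        s4 = deriv(shifted(state, s3, dt))
        state = tuple(
            x + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
            for x, a, b, c, d in zip(state, s1, s2, s3, s4)
        )
        positions.append(state[0])
    return positions

def tail_amplitude(positions):
    """Largest displacement over the final quarter of the run."""
    tail = positions[len(positions) * 3 // 4:]
    return max(abs(x) for x in tail)

if __name__ == "__main__":
    print("coupled:  ", tail_amplitude(simulate(k=0.3)))
    print("uncoupled:", tail_amplitude(simulate(k=0.0)))
```

In this toy, the uncoupled pair simply dies away, while the nonreciprocally coupled pair grows from a tiny nudge into a steady oscillation whose size and frequency are set by the system's own parameters rather than by any periodic external push.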

These findings point toward a different way of designing technology. Devices that can generate their own steady signals could become smaller and more energy-efficient, since they wouldn’t need constant external input. This could impact everything from electronics to medical devices. The same principles could also be used to build highly sensitive sensors, where stable, self-sustained motion makes it easier to detect tiny changes in the environment.

There are also implications for timekeeping, where naturally stable oscillations could provide reliable timing for systems like communications or navigation. More broadly, the work suggests a path toward self-organizing technologies—materials, robots, or systems that don’t need to be tightly controlled, but instead coordinate themselves through interactions. In that sense, it offers a new framework for building systems that are not just efficient, but also more autonomous and adaptive.

Community & other links

Phrase of the week: “Recursive self-improvement (RSI): AI systems are increasingly being used to build better AI systems, creating a feedback loop that could accelerate the very curves I showed you above”

Other Links:

“To design AI for disruptive science, we would need to understand what “rules” make one paradigm better than another, and build systems that optimize for these. This turns out to be a harder problem than scaling compute. The answer cannot simply be experimental success, since experiments are slow and do not always reliably distinguish between paradigms (as was the case with Lorentz and Einstein). And there are other plausible candidates, but none yet offer a sufficient formulation.” -- Djajadikerta, Alvin. “Designing AI for Disruptive Science.” Asimov Press (2026). DOI: 10.62211/29ej-27et

Google launches “Stitch”: Introducing “vibe design” with Stitch

NVIDIA and Global Robotics Leaders Take Physical AI to the Real World

The Rise of AI-Powered Robotics: How 2026 Is Reshaping Manufacturing and Automation

Field Trip

Did we miss anything? Would you like to contribute to Decoding Science by writing a guest post? Drop us a note here or chat with us on X.