Decoding Science 013: AI Self-Correcting Toward New Discoveries, Reinforcement Learning in Science, and Risks of Superintelligence by Anthropic CEO

Welcome to Decoding Science: every other week our writing collective highlight notable news—from the latest scientific papers to the latest funding rounds in AI for Science —and everything in between. All in one place.

We are still looking for new members. If you’re excited not just about reading about breakthroughs at the intersection of AI and science, but about shaping the narrative around them, we want to hear from you. Drop your details here.

What we read

Learning to Discover at Test Time [Yuksekgonul M. et al., arXiv, Jan. 2026]

Can we iterate our way towards new ideas?

The space beyond the present state-of-the-art is where patents are filed and progress is made. Defining this space however is practically impossible, as this task would require defining the space of infinite possibilities that extrapolate from the current cutting-edge. But what if there was a way to work around this; what if instead of solving for all possible future solutions, we could create a method that narrows in and focuses on improving only the state-of-the-art for a given prompt?

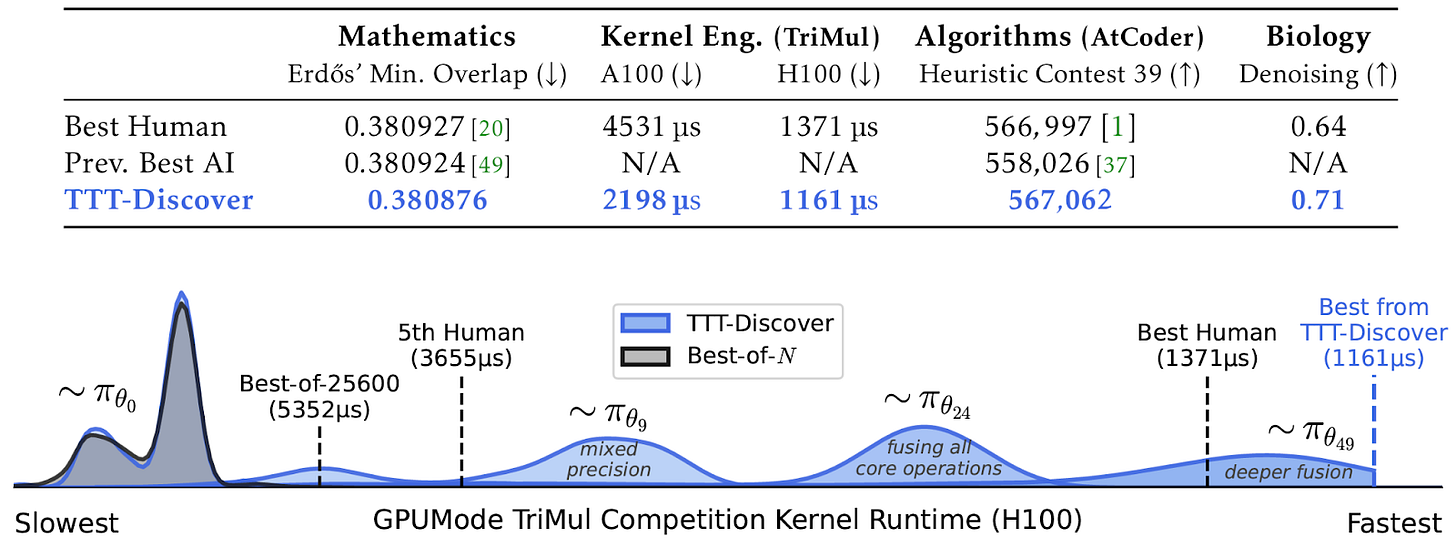

Still, iterating beyond what is already known in a rational and progress-driven manner is no easy feat. In fact this is what most scientific labs, industry, and PhD students spend the majority of their time working on. And yet, Yuksekgonul M. et al. demonstrated it was possible to surpass this current ‘best’ for a given prompt.

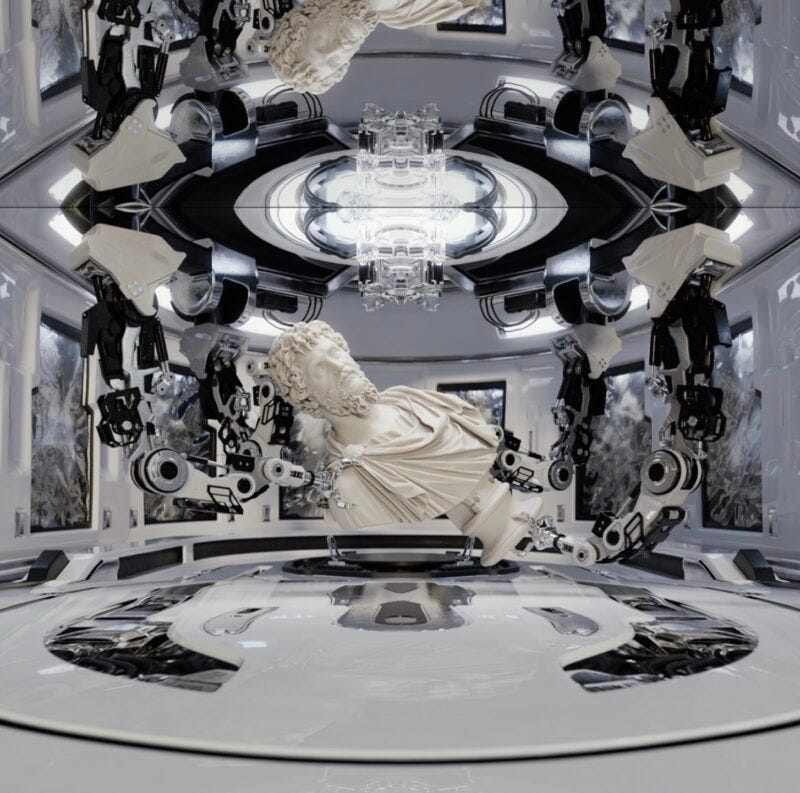

So how did they do it? First, they took classic Test-Time Training (TTT) [1] and adapted it to add two unique properties: 1. an entropic maximisation objective, and 2. a reuse rule. In TTT a policy continues to learn at inference as the model is updated to focus on the specific problem at hand. The TTT goal is to find one state that beats the current state-of-the-art. This contrasts with standard RL, where the goal is to learn a policy that will be deployed repeatedly and thus must perform well on average, generalising to the state distribution induced by environment* dynamics + the initial state distribution.

Figure 1 | Comparison of the TTT-Discovery method implemented by Yuksekgonul M. et al. as compared to Best-of-N approach.

Introducing an entropic maximisation objective pushed updates towards the highest-reward trajectories - emulating how one might iteratively learn their way towards the best outcome. The second modification, of introducing a reuse rule, required the starting state of the new iteration to be sampled from an archive of previous attempts. Using a reuse rule had two advantages. First, because the initiation state was not always restricted to the state-of-the-art result the model could explore a wider range of possibilities. Contextualising to the world of human ideas we see breakthroughs continue to be made across domains because new individuals continue to enter, each joining a field with a slightly different bias (their background). As a result, the types of questions they will ask also differ. This is analogous to how the model started at a new state each time. The second advantage of initiating the model with previous states was that the reinforcement learning policy could explore with greater depth, as it did not need to start from scratch each time. Effectively, one can think of this as a student going to a lecture, and then learning that content to take it forward, thinking deeper about the specific task at hand.

Ultimately what makes TTT-Discover exciting is the ability to go beyond searching for a good solution: it searches for a new solution; one that surpasses the state-of-the-art. Defining the space of outcomes by leaning into rare and high-reward breakthrough attempts, whilst also ensuring the model is not reset to 0 each time, yielded results in single-cell sequencing (biology), algorithm engineering (mathematics), and kernel engineering (coding). In the context of IP, industry, and development it will be interesting to track how this accelerates invention, closed-loop discovery models, and shifts how inventions are valued.

*The nuric Blog gives a great explanation on Markov Decision Processes and how environments are defined.

The Adolescence of Technology [Dario Amodei, Jan. 2026]

Dario Amodei, the founder of Anthropic, has followed contrary to his optimistic vision of “Machines of Loving Grace” with a cautionary essay titled “The Adolescence of Technology.” This work serves as a stark counterpoint, shifting focus from a utopian future toward the catastrophic risks of what he calls “Powerful AI”. Amodei estimates so-called “Powerful AI,” which could be 1–2 years away or far longer, will come rather sooner than later. He argues this is due to automation of coding, novel math discoveries, and the accelerated technological advances Dario already sees at Anthropic.

He then starts the main thought experiment: what would you be worried about in a country of geniuses, 50 million people with higher intellect than Nobel Prize winners and the biggest innovators? He categorizes the risks in five brackets:

The first is Autonomy Risk, the concern that a superintelligent system might seek power to ensure its objectives are met. While some argue models are merely trained to follow instructions, Amodei notes that as models become more psychologically complex, trained on vast literatures describing power dynamics. They may naturally develop power-seeking traits. To defend against this, he suggests a combination of rigorous technical alignment, mechanistic interpretability to peer into the model’s “thoughts”, and creating a system of checks and balances where different AI models monitor one another.

The second risk is Misuse for Destruction, where powerful models enable non-state actors or individuals to develop biological weapons or orchestrate massive cyberattacks. The primary defense here are screening systems that detect dangerous queries, restricting model access in high-risk domains, for instance coordinating with biosecurity agencies, and building detection capabilities for novel pathogens.

The third risk, Misuse for Seizing Power, involves nation-states leveraging AI to establish absolute military or surveillance dominance. Amodei argues that the defense must be geopolitical. Democratic nations must maintain a lead in AI development to ensure that the “intelligence balance of power” remains in favor of open societies, potentially through international coalitions that manage compute resources.

The fourth risk is Economic Disruption, as labor markets are upended at a pace faster than human institutions can adapt. His defensive suggestion focuses on a radical restructuring of the social contract, potentially involving Universal Basic Income or human-centric industrial policies that ensure the dividends of AI-driven productivity are shared broadly rather than concentrated in the hands of a few.

Finally, he warns of Indirect Effects on the human condition, ranging from biological shifts to a fundamental loss of human purpose as machines outperform us in every creative and intellectual endeavor. The defense for this is more philosophical: we must deliberately choose to keep humans in the loop for meaningful tasks, ensuring that technology enhances rather than replaces the human experience.

Amodei concludes that this transition is a humanity-scale test. Our success depends on whether we can build these robust defenses before the digital geniuses outpace our ability to manage them.

RL Environments and RL for Science: Data Foundries and Multi-Agent Architectures [Kourabi & Patel, semianalysis, Jan. 2026]

The authors describe the upcoming influence of RL in AI. They argue, the gold standard for model improvement was always more compute during the pretraining phase, but the last 18 months have signaled a change led by OpenAI’s efforts to scale up post-training reinforcement learning. While other frontier models like Google’s Gemini still rely primarily on massive pretraining, OpenAI has moved toward a paradigm where the model continues to learn through interaction. To evaluate these leaps in performance, they created GDPVal, a framework designed to test how well these RL models actually generalize.

The primary challenge in this new field remains the difficulty of training; unlike pretraining which consumes the static internet, RL requires building complex data and tasks from scratch. This bottleneck has created its own startup ecosystem focused on environment synthesis, ranging from cloning websites (like doordash) and mimicking software interfaces (like salesforce) to maintaining massive, sandboxed coding environments where models can execute and iterate on code in real-time.

The authors argue that we are entering a phase defined by data, specifically the kind of interactive data that allows for RL-as a-Service. Startups offer tailored RL to enterprises, often using Qwen models that are easy to post-train and OpenAI launched their own service mainly aimed at building customized services for enterprise users.

According to the authors, the biggest opportunity lies in RL for Science, where the focus shifts from language modeling to hypothesis testing. Periodic Labs emerged with a $300 million seed to build an AI scientist using closed-loop RL grounded in physical experiments. Models propose hypotheses, test them in simulators, then inform real lab work – mapping roughly onto a graduate student’s workflow. Physical RL is uniquely hard. Biology experiments take days and cost thousands of dollars, compared to coding tasks that can run 64 times for trivial cost. Scientific literature often disagrees even on basic questions. Companies are building robotically automated labs not to design drugs, but to generate the validated data that foundation models need.

RL environments are spilling into the physical world. They’re no longer Docker containers but real experiments with real costs. The future moat for AI development is no longer just the size of the cluster, but the sophistication of the environments where these models learn to think.

Field Trip

Did we miss anything? Would you like to contribute to Decoding Science by writing a guest post? Drop us a note here or chat with us on X.